The most important improvement I will incorporate into the project is a complete ban on AI-generated material. From my prior research, I saw first-hand how uncanny and unprofessional the use of AI looks in a field such as this. For that reason, this project will not include AI-generated photographs, AI-generated art of any kind, or AI-generated text (including AI virtual assistants), since none of these features is both ethical and sustainable.

AI-generated art

AI is inherently unsustainable because of the large amounts of water used to cool the powerful servers that host it, as well as the large amounts of electricity needed to keep it running at all times. AI does not know how to create. It can only generate an output by recombining patterns learned from imagery collected online and distorting them to fit the prompt it is given. This means that human-made art posted online ends up being fed into the AI algorithm. This is unethical, as it risks infringing copyright law: the AI does not check whether the art it has learned from is copyrighted.

More and more companies are switching to AI-generated art to avoid the cost of hiring graphic designers and digital marketers, which pushes people out of potential careers. This is the opposite of the effect we want to see from the Participatory Collective, whose goal is to ensure that users have as much say as possible in their interactions and to nurture creativity and individualism.

AI-generated photographs

This use of AI is by far the least ethical, for several reasons. Generated photos are based on real photographs of real people posted online. The AI does not ask permission to use those photos, and there is no way to stop your own photos from being fed into its algorithm. This makes it very easy to create deepfakes: altered photos that show a person doing something they have not done, or in a scenario they have never been in. Worst of all, the person whose pictures were used cannot fight back unless they have legally protected their face, voice, name and/or brand, which the average person typically has not done.

This creates a highly unethical legal loophole and reflects the corporate coldness that we are working so hard to avoid with the Participatory Collective.

As an additional note, using AI photographs feels incredibly lazy. Even when trying to cut the cost of hiring a photographer, it is possible to simply take a photo of your team mid-work (with permission) on your phone, showing that you are a caring group of people who are transparent about how you help others. AI photographs can also create a false pretence, since they are unlikely to portray realistically how the work is actually done. They also look uncanny: AI will often repeat the same setting if the same prompt is reused, and the people it generates can look distorted and unsettling. Finally, this can convince users that the organisation is not a reliable source, since no real clients or users are portrayed anywhere on its website.

AI-generated text

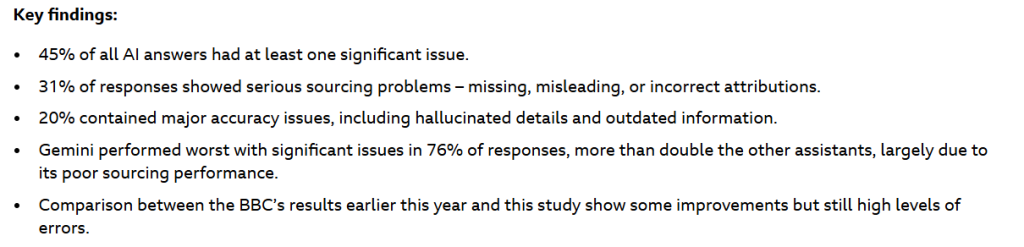

This AI feature is less unethical than it is annoying, but it can at times be dangerous, as it can easily mislead the user. The BBC has conducted research into how accurately AI assistants convey news content to their users (Bbc.co.uk, 2025). The results are as follows:

The full research post can be found on the BBC website (see References).

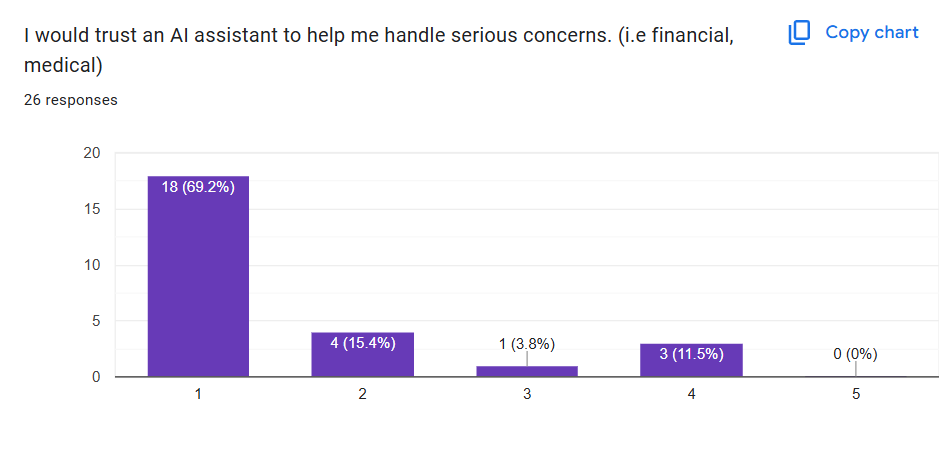

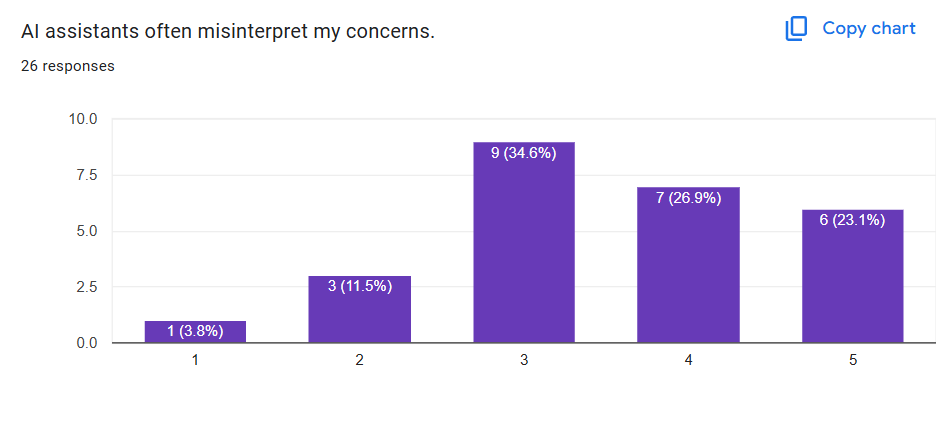

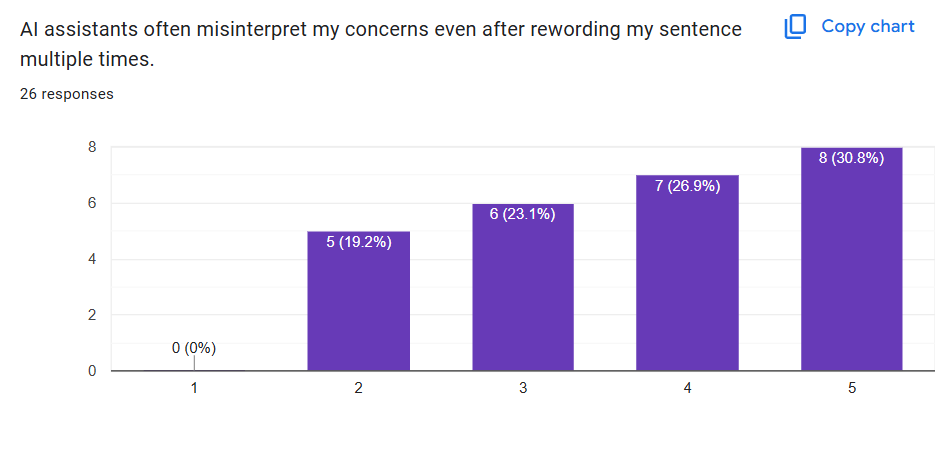

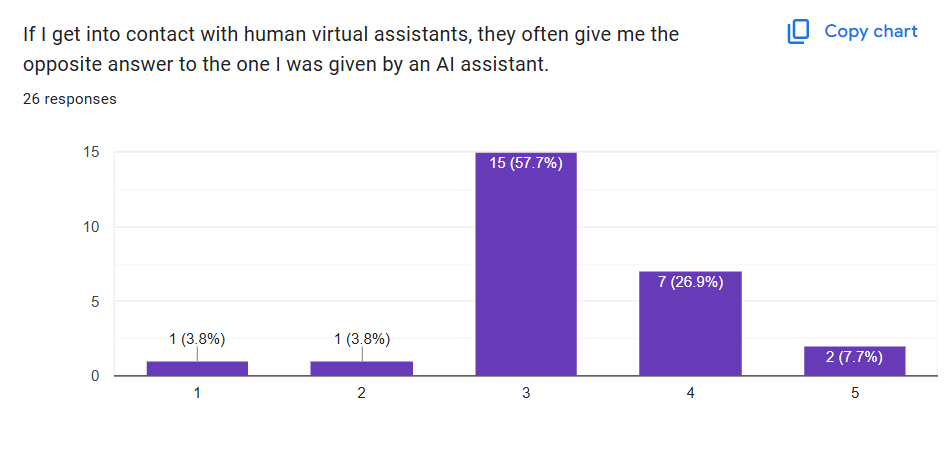

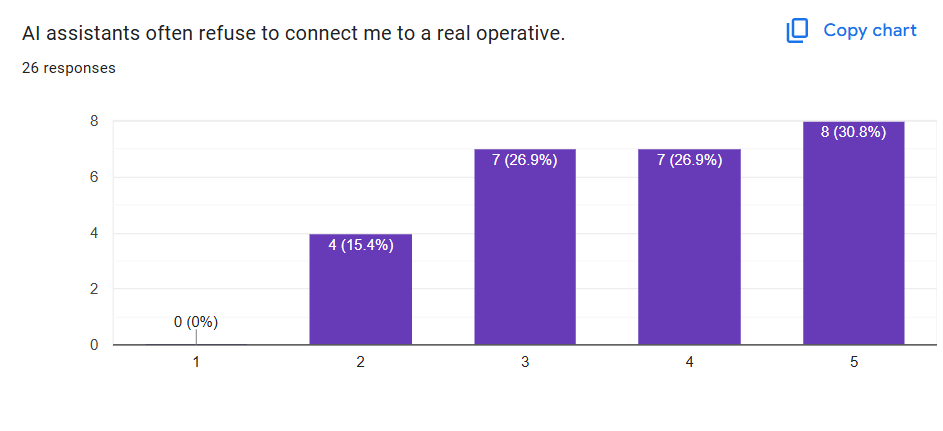

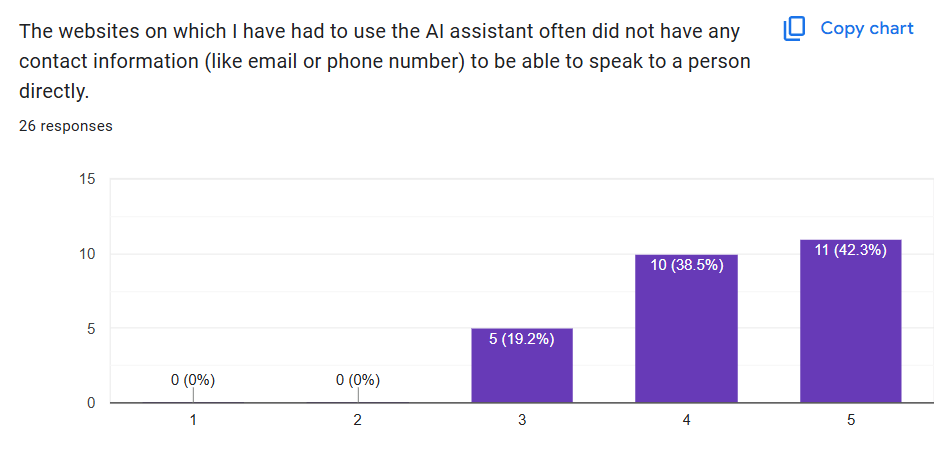

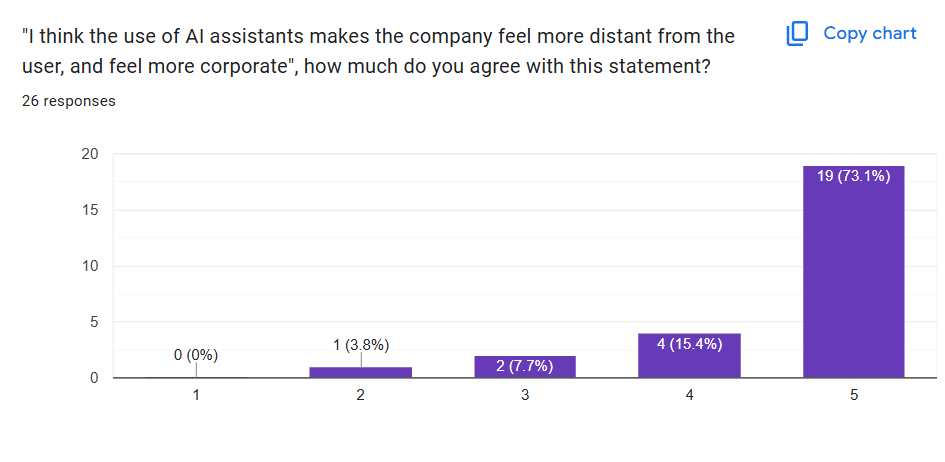

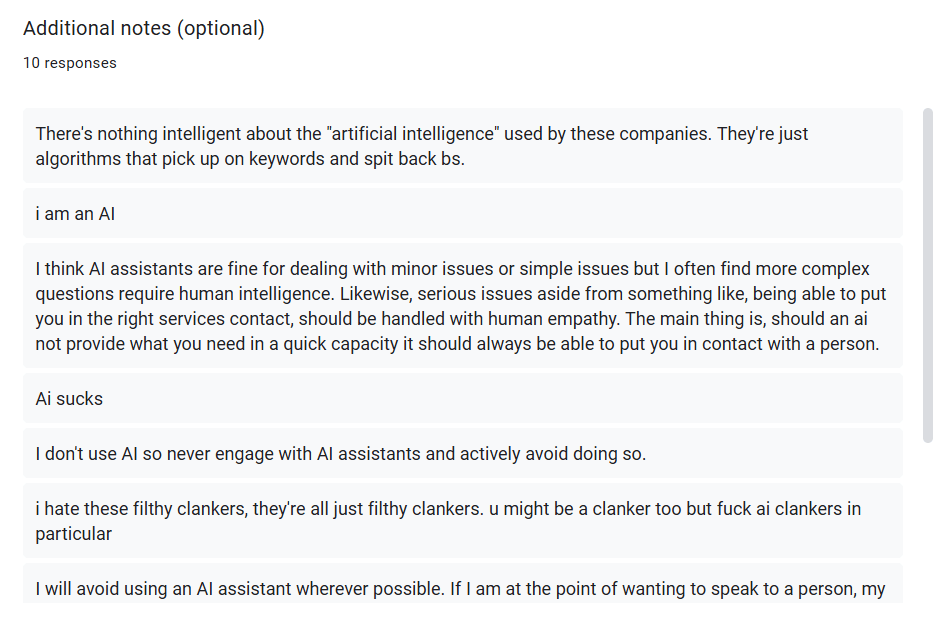

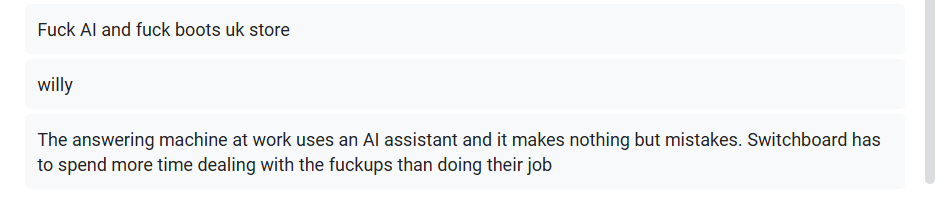

Almost half (45%) of the AI answers studied misrepresented the original news content, and 20% (1 in every 5) contained major accuracy issues, such as invented or outdated information. Because of this, I decided to conduct my own survey asking people about their experience with AI virtual assistants. Many companies use these tools to answer people faster, but would they fit with the values that the Participatory Collective holds?

The participants of this survey are aged 16–30 and were asked to answer truthfully on a scale from 1 (“strongly disagree”) to 5 (“strongly agree”).

In general, the response to AI assistants has been negative, with an overwhelming majority of participants saying they do not trust them to help. When dealing with something as fragile as mental health, false or unhelpful information from an AI assistant can worsen the wellbeing of a vulnerable user and signal to them that they do not matter, since no real person is willing to answer them directly.

The participants' additional comments were also mostly negative, either critiquing the AI's capabilities or passionately venting frustrations from previous experiences with AI assistants. This solidifies my decision to boycott any use of AI in this project, which I believe will be highly beneficial for the client.

References:

Isba.org. (2018). Artificial Intelligence (AI) and Entertainment: How To Protect and Enforce Your Rights in the Digital Age of AI | Illinois State Bar Association. [online] Available at: https://www.isba.org/public/guide/ai-entertainment.

Bbc.co.uk. (2025). Largest study of its kind shows AI assistants misrepresent news content 45% of the time – regardless of language or territory. [online] Available at: https://www.bbc.co.uk/mediacentre/2025/new-ebu-research-ai-assistants-news-content.

Thubron, R. (2025). Call center workers say their AI assistants create more problems than they solve. [online] TechSpot. Available at: https://www.techspot.com/news/108547-call-center-workers-their-ai-assistants-create-more.html [Accessed 23 Nov. 2025].